Some of these cloud services are serverless, which means these services are executed by demand and the cloud provider takes care of the server infrastructure on behalf of the customer. In many of these services, you can increase the memory and processing capacity just by clicking some buttons It’s usually very easy to escalate the capabilities of the cloud service you are using.There are a lot of engineers working to make sure these services don’t have any security breaches.There are several advantages of using a cloud solution, for example: Some famous examples of cloud providers are Amazon AWS, Google Cloud, and Microsoft Azure. Cloud computing is making services like hosting and storage, available over the internet. In this blog post, we are going to set the web scraper script on the cloud. Print(header_element.text) # Print found element text contentĭriver.quit() # Close browser How to use the Web Scraper script? Header_element = driver.find_element(By.CSS_SELECTOR, 'h1') # Find H1 element Here’s an example of a Web Scraper that opens a browser and gets the header element of a page and prints its header content:įrom import Byĭriver = webdriver.Chrome() # Open browser The driver version needs to be compatible with the installed browser version This driver provides an API so the selenium package can manage the browser. This technique requires a driver to communicate with the browser installed (e.g.

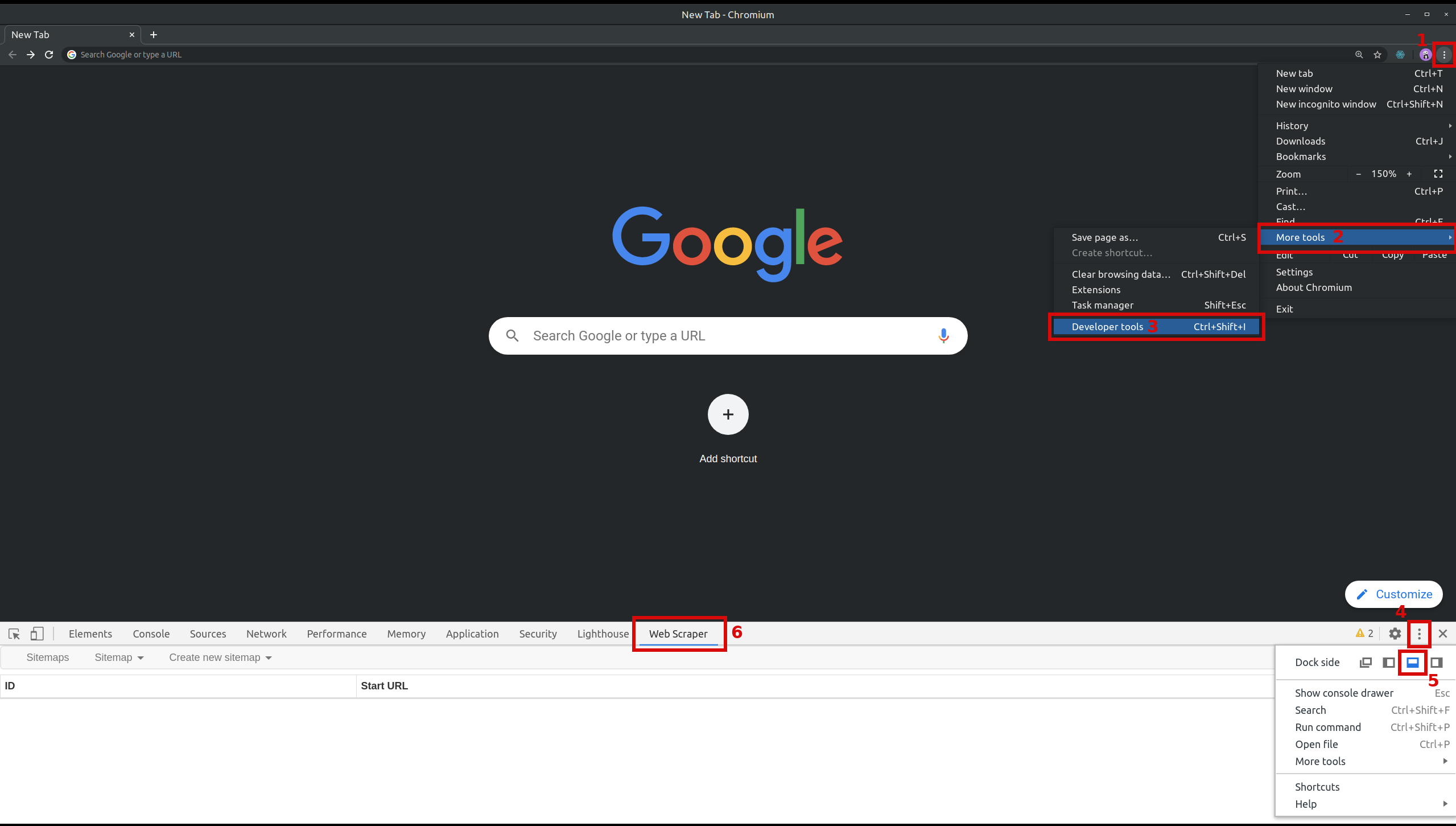

One famous package used for this is Selenium. So with DOM parsing, you are capable of opening a page, clicking buttons, filling forms, running your scripts on the page, and then getting the information you want. This technique uses a browser controlled by automation software to interact and retrieve the information on the website. Retrieved from (HTML_parser)#Code_example Soup = BeautifulSoup(response, 'html.parser') In this script, we make a request to Wikipedia’s main page, load its response into a BeautifulSoup instance, then use it to get all of the anchor elements of the page, and finally iterate through the elements found and print each one of its HREF properties: In this case, instead of dealing with the raw HTML text, the HTML is parsed into elements, making it easier to find the content you want to scrape.īelow is a Python example using the package Beautiful Soup. Below is a screenshot where we combine the Unix commands curl and grep respectively, get the website HTML, and find the text inside the quotes, which is the title tag in this case. This technique consists of finding a text pattern match within the website-generated HTML. Every programmer has already web scraped from stack overflow ^^ To use it, you only need to select the text you want to get, copy it, and paste it wherever you want to save it. This technique is very simple and manual. However, if there is no convenient API to access the data you need and the website you want to scrape is unlikely to have frequent interface changes, Web Scraping is the way to go! List of some web scraping techniques: Web scraping often requires more processing capacity and is also very likely to break if the website changes its display, or even if an element changes its identification parameters. You should consider web scraping as a last-resort alternative because it’s usually more beneficial to consume APIs (application programming interfaces) which are interfaces made to get the exact information you want. 
In this blog post, we will focus on Web Scraping, which is a type of data scraping that occurs when the data being retrieved is from a website. Data Scrapingĭata Scraping is retrieving information generated from another program. Or, if you are not into reading definitions and just want to go straight to the point, skip to “The codebase” section of this blog post. Let’s talk about these technologies and go through a Python example that solves this mystery. Besides, it’s awesome to see a browser opening, clicking on buttons, and filling out forms by itself.ĪWS Lambda Functions is an easy and low-price service to deploy scripts to the cloud, but how can we have a chrome browser installed in an AWS lambda function? If you don’t have a good way to retrieve information from a website, data scraping is the way to go. How are we going to deploy it to AWS Lambda?.
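To give a rough idea of what "running on demand" looks like on AWS Lambda, here is a minimal sketch of a Python entry point. It assumes the usual Python handler shape, a function that receives an `event` and a `context`. The `scrape_header` helper and the `url` event field are hypothetical names used only for illustration, and the helper is a stub that stands in for the Selenium scraper instead of launching a browser.

```python
import json


def scrape_header(url):
    """Hypothetical stand-in for the Selenium scraper.

    A real deployment would open a browser here. This stub only echoes
    the URL so the sketch runs without a browser installed.
    """
    return f"header of {url}"


def lambda_handler(event, context):
    """Entry point that the Lambda service calls for each invocation."""
    # "url" is an assumed event field, not something defined by AWS.
    url = event.get("url", "https://example.com")
    header = scrape_header(url)
    # Return a JSON-serializable result for the caller.
    return {
        "statusCode": 200,
        "body": json.dumps({"url": url, "header": header}),
    }


if __name__ == "__main__":
    # Local smoke run: call the handler the way Lambda would, with no context.
    print(lambda_handler({"url": "https://example.com"}, None))
```

Keeping the scraping logic in its own function means the handler stays a thin wrapper, so the same scraper can be tried locally before it is deployed.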
